Our design chapter has been about system fidelity: moving fast without quietly breaking the system you’ll need to maintain tomorrow.

This post is the engineering sibling: what happens when you apply the same “Generate → Consolidate” logic to code, workflows, and shipping.

If you haven’t read the design piece yet, start here: Claude Code vs V0 for Design Engineering: how to prototype in code without breaking your design system.

The why (before the how)

We’re investing in a more opinionated Claude Code way of working for one simple reason:

AI makes it easy to create output. It does not automatically make that output shippable.

In practice, teams hit three predictable bottlenecks:

- Consistency: changes drift from patterns (style, architecture, security, accessibility, naming, conventions).

- Verification: “looks good” is not a test suite, and green CI isn’t the same as “safe in production”.

- Coordination: the hardest part is not writing code, it’s keeping shared context, decisions, and standards coherent across a team.

So our goal isn’t “let Claude write more code”.

It’s: shorten loops while keeping quality and risk under control.

This is also what we admire in how Intercom approaches it: they treat the agent setup as engineering infrastructure, not a personal productivity hack.

A quick primer (for non-engineers, and for engineers who like nouns)

When we say “Claude Code OS”, we mean a few concrete building blocks:

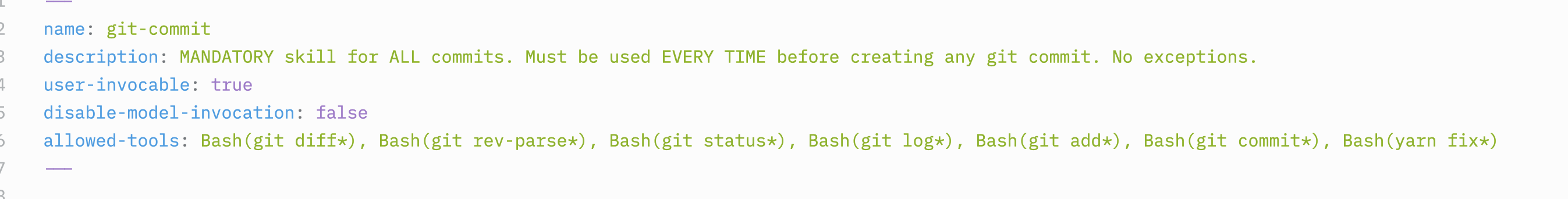

- Skills: packaged instructions + references + scripts. Think “mini playbooks” Claude can invoke.

- Hooks: rules that run before/after actions (e.g., block dangerous commands, auto-run checks).

- Progressive disclosure: Claude doesn’t load the whole universe; it pulls the right files at the right time.

- Verification: automated ways to prove a change works (tests, smoke flows, assertions, observability checks).

- Governance: ownership, versioning, and discoverability so the system scales beyond one power user.

If that sounds like process: correct.

The alternative is accidentally scaling chaos.

1) From prompts to institutional knowledge: skills as culture, not snippets

A good skill is not “a markdown file”.

It’s a folder with:

- a clear intent (“when to use this”)

- examples and gotchas

- optional scripts for repeatable work

- a verify step (“how we know it worked”)
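The folder shape above can be checked mechanically. Here is a minimal sketch, assuming a hypothetical layout (`SKILL.md`, `examples/`, `verify.md`, plus an optional `scripts/`); the file names are our illustration, not a required convention.

```python
from pathlib import Path

# Hypothetical layout, for illustration only:
#   <skill>/SKILL.md   intent ("when to use this")
#   <skill>/examples/  examples and gotchas
#   <skill>/verify.md  verify step ("how we know it worked")
#   <skill>/scripts/   optional, so it is not checked here
REQUIRED = ("SKILL.md", "examples", "verify.md")


def missing_parts(skill_dir: Path) -> list[str]:
    """Return the required entries that are absent from a skill folder."""
    return [name for name in REQUIRED if not (skill_dir / name).exists()]
```

A check like this could run in CI so that a skill without a verify step never lands in the catalog.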

At ~50–100 skills you’re no longer “configuring Claude”.

You’re codifying your engineering culture.

Our current buckets (where ROI actually shows up)

- Repo / library reference: “how we do X here” + gotchas

- Product verification: smoke flows, e2e drivers, assertions

- Data & observability: dashboards, query patterns, incident triage

- Automation: repeatable routines (release notes, dependency bumps, changelog)

- Review & quality: adversarial review, security checks, style guardrails

2) Context is expensive: progressive disclosure beats “load everything”

The anti-pattern is “stuff the prompt with everything we know”.

It feels safe, but it’s slow, costly, and increases instruction conflicts.

Pattern: thin start, thick when needed

- Start with minimal boot context (goal + constraints + Definition of Done)

- Use skills as entry points to deeper context

- Keep heavy reference docs in files Claude can open on demand (api.md, gotchas.md, examples/)
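To make "thin start, thick when needed" concrete, here is a rough sketch of the idea in plain Python. The `Context` class and its method names are hypothetical; it only illustrates that the boot prompt stays small while reference files are read on first request.

```python
from dataclasses import dataclass, field
from pathlib import Path


@dataclass
class Context:
    """Thin boot context plus reference files loaded only on request."""
    goal: str
    constraints: list[str]
    definition_of_done: str
    reference_dir: Path
    _loaded: dict = field(default_factory=dict, init=False, repr=False)

    def boot_prompt(self) -> str:
        # Only the minimal boot context goes in up front.
        lines = [f"Goal: {self.goal}"]
        lines += [f"Constraint: {c}" for c in self.constraints]
        lines.append(f"Definition of Done: {self.definition_of_done}")
        return "\n".join(lines)

    def open_reference(self, name: str) -> str:
        # Heavy docs (api.md, gotchas.md, ...) are read the first time
        # they are asked for, then cached.
        if name not in self._loaded:
            self._loaded[name] = (self.reference_dir / name).read_text()
        return self._loaded[name]

    def loaded(self) -> list[str]:
        """Names of the reference files pulled in so far."""
        return sorted(self._loaded)
```

Tracking `loaded()` also gives a cheap signal of which reference docs are actually used.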

3) Versioning & governance: skill sprawl is real (and boringly fatal)

If skills become institutional knowledge, they need to be treated like code:

- ownership

- reviews

- changelog

- deprecations

- discoverability

What we’re experimenting with right now

- taxonomy prefixes per domain (fe/, be/, ops/, data/)

- a skill contract: intent, inputs/outputs, gotchas, verify step

- a weekly “skill release” cadence

- telemetry: usage logs to prune low-signal skills
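A skill contract and usage-based pruning could look roughly like this. It is a sketch under assumptions: the `SkillContract` fields mirror the contract listed above, while the skill names, the usage-count format, and the threshold are hypothetical.

```python
from dataclasses import dataclass

DOMAINS = ("fe", "be", "ops", "data")  # taxonomy prefixes per domain


@dataclass(frozen=True)
class SkillContract:
    name: str          # e.g. "fe/forms" (hypothetical skill name)
    intent: str
    inputs: str
    outputs: str
    gotchas: str
    verify_step: str

    def problems(self) -> list[str]:
        """List contract violations; an empty list means the skill passes."""
        issues = []
        prefix, _, rest = self.name.partition("/")
        if prefix not in DOMAINS or not rest:
            issues.append("name must look like <domain>/<skill>")
        for field_name in ("intent", "inputs", "outputs", "gotchas", "verify_step"):
            if not getattr(self, field_name).strip():
                issues.append(f"{field_name} is empty")
        return issues


def prune_candidates(usage: dict[str, int], min_uses: int) -> list[str]:
    """Skills whose logged usage count falls below the threshold."""
    return sorted(name for name, count in usage.items() if count < min_uses)
```

The pruning function only proposes candidates; whether a rarely used skill is low-signal or simply rare but critical is still a human call.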

4) Hooks are the real accelerator (and your safety net)

Hooks are one way to turn “best practices” into default behavior, but in practice, a lot of the guardrails live in your Claude Code config + skill-level allowlists.

In our workflow, this can show up as:

- Claude settings/config (e.g. settings files) that define defaults and constraints.

- Skill “allowed” config that limits which commands/tools a given skill can run (so safety is scoped by intent).

- Optional hooks where you want enforcement around actions (before/after), like blocking dangerous commands or automatically running checks.

The underlying jobs stay the same:

- Prevention: block risky actions (rm -rf, force push, destructive migrations)

- Verification: run checks after edits (lint/test/smoke)

We strongly prefer on-demand enforcement for highly restrictive modes (so it doesn’t annoy you all day), and default-on checks for always-useful verification.
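The prevention job boils down to a check that runs before a command executes. Below is a minimal sketch of such a check as a pure function. The patterns are illustrative and far from complete, and how the function is wired into a hook or settings file is left out because it depends on your setup.

```python
import re
from typing import Optional

# Illustrative patterns only; a real blocklist needs more care than this.
RISKY_PATTERNS = [
    (re.compile(r"\brm\s+-[a-zA-Z]*r[a-zA-Z]*f|\brm\s+-[a-zA-Z]*f[a-zA-Z]*r"),
     "recursive force delete"),
    (re.compile(r"\bgit\s+push\b.*(--force\b|\s-f\b)"), "force push"),
    (re.compile(r"\bdrop\s+(table|database)\b", re.IGNORECASE),
     "destructive migration"),
]


def check_command(command: str) -> Optional[str]:
    """Return the reason a command is blocked, or None if it may run."""
    for pattern, reason in RISKY_PATTERNS:
        if pattern.search(command):
            return reason
    return None
```

Returning the reason rather than a bare boolean lets the caller explain to the agent (and the human) why the action was refused.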

5) “Fast lane” reviews: auto-approving safe PRs (with strict rules)

We’re exploring faster review loops, but only with an explicit risk model. It’s worth saying clearly: this is not fully “auto” yet in our current R&D.

Right now, the flow is closer to:

- a PM/designer/engineer manually triages what’s safe to pick up (often by tagging Claude in Slack)

- Claude drafts a PR

- the PR is still reviewed manually

“Fast lane” (auto-approval for truly low-risk changes) is a next phase once the workflow is no longer in beta.

Our proposal: 3 lanes

- Safe lane (auto-approve possible)

  - docs, copy, non-prod config, refactors with no behavior change

  - required: lint + unit tests + static analysis

- Normal lane

  - feature work with limited blast radius

  - required: reviewer + e2e smoke

- Critical lane

  - auth, payments, permissions, data migrations, infra

  - required: 2 reviewers + staged rollout + runbook
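One way to sketch the three lanes is a path-based classifier that always picks the strictest lane any changed file touches. The path patterns below are hypothetical and need mapping to a real repo layout. Paths alone also cannot tell whether a refactor changes behavior, so this only covers the part of the risk model that is visible in file names.

```python
from fnmatch import fnmatchcase

# Hypothetical path patterns; adapt them to your own repo layout.
CRITICAL = ("*auth*", "*payments*", "*permissions*", "*migrations*", "infra/*")
SAFE = ("docs/*", "*.md", "copy/*")

REQUIRED_CHECKS = {
    "safe": ["lint", "unit tests", "static analysis"],
    "normal": ["reviewer", "e2e smoke"],
    "critical": ["2 reviewers", "staged rollout", "runbook"],
}


def lane_for(changed_paths: list[str]) -> str:
    """Pick the strictest lane that any changed file falls into."""
    if not changed_paths:
        return "normal"  # nothing to judge, so never auto-approve
    if any(fnmatchcase(p, pat) for p in changed_paths for pat in CRITICAL):
        return "critical"
    if all(any(fnmatchcase(p, pat) for pat in SAFE) for p in changed_paths):
        return "safe"
    return "normal"
```

The classifier is deliberately conservative: a single critical file escalates the whole PR, and a PR is only "safe" when every file matches a safe pattern.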

Feature flags are the bridge between speed and safety: ship small, validate in prod conditions, roll back without drama.

6) Read-only production introspection (soon): functional teams asking real questions safely

This is the most strategic unlock for us.

Imagine a world where PM/ops/CS can ask:

- “Did signups drop after yesterday’s release?”

- “Which cohort is failing onboarding?”

- “Is this issue limited to one tenant?”

…without raw production access.

What it requires (non-negotiables)

- allowlisted queries/commands

- rate limits

- PII redaction

- structured outputs (summaries, not dumps)

- audit trail
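The five requirements above fit into one small gateway object. The sketch below is an assumption-heavy illustration: the query id, the SQL, the email-only redaction, and the `run` callback that stands in for a read-only database client are all hypothetical.

```python
import re
import time
from dataclasses import dataclass, field

# Hypothetical allowlist: question id -> parameterized, read-only SQL.
ALLOWED_QUERIES = {
    "signups_by_day": "SELECT day, count FROM signups_daily WHERE day >= :since",
}
# Email-only redaction, as a stand-in for real PII handling.
EMAIL = re.compile(r"[\w.+-]+@[\w-]+\.[\w.-]+")


@dataclass
class Gateway:
    max_calls_per_minute: int = 5
    _calls: dict = field(default_factory=dict)
    audit_log: list = field(default_factory=list)

    def ask(self, user: str, query_id: str, run, now=None) -> dict:
        now = time.time() if now is None else now
        recent = [t for t in self._calls.get(user, []) if now - t < 60]
        if query_id not in ALLOWED_QUERIES:
            status, summary = "denied", "query is not allowlisted"
        elif len(recent) >= self.max_calls_per_minute:
            status, summary = "rate_limited", "too many requests"
        else:
            recent.append(now)
            rows = run(ALLOWED_QUERIES[query_id])
            # Structured output: a redacted summary, never the raw rows.
            status = "ok"
            summary = EMAIL.sub("[redacted]", f"{len(rows)} rows; first: {rows[:1]}")
        self._calls[user] = recent
        # Every request is recorded, including the refused ones.
        self.audit_log.append(
            {"user": user, "query": query_id, "status": status, "at": now}
        )
        return {"status": status, "summary": summary}
```

Denied and rate-limited requests land in the audit log too, which is usually where the interesting questions show up.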

7) Failure modes (and how we prevent a haunted prompt mansion)

If we do this wrong, we’ll get:

- contradictory skills

- a bloated catalog nobody understands

- token waste

- false confidence (“green CI” ≠ safe behavior)

Our mitigations:

- one canonical skill per domain (single source of truth)

- deprecations that redirect

- progressive disclosure by default

- verification skills as first-class citizens
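"Deprecations that redirect" can be as simple as a lookup that follows old names to the canonical skill. A minimal sketch, with a hypothetical registry and skill names:

```python
# Hypothetical registry: deprecated skill name -> its replacement.
REDIRECTS = {
    "fe/forms-old": "fe/forms",
    "fe/forms-v0": "fe/forms-old",
}


def resolve(name: str, redirects: dict[str, str] = REDIRECTS) -> str:
    """Follow deprecation redirects until a canonical skill name is reached."""
    seen = {name}
    while name in redirects:
        name = redirects[name]
        if name in seen:
            raise ValueError(f"redirect loop at {name!r}")
        seen.add(name)
    return name
```

The loop check matters more than it looks: a catalog with circular deprecations is exactly the kind of haunted mansion this section is trying to avoid.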

Conclusion

AI can accelerate delivery.

But only if you build the surrounding system: constraints, verification, and governance.

That’s what we’re building: a Claude Code OS that makes speed safe, and makes quality repeatable.

TBC ... 👀